Robots are Dumb

The history of Army C2 and why the mass proliferation of autonomous systems on the battlefield makes this time different

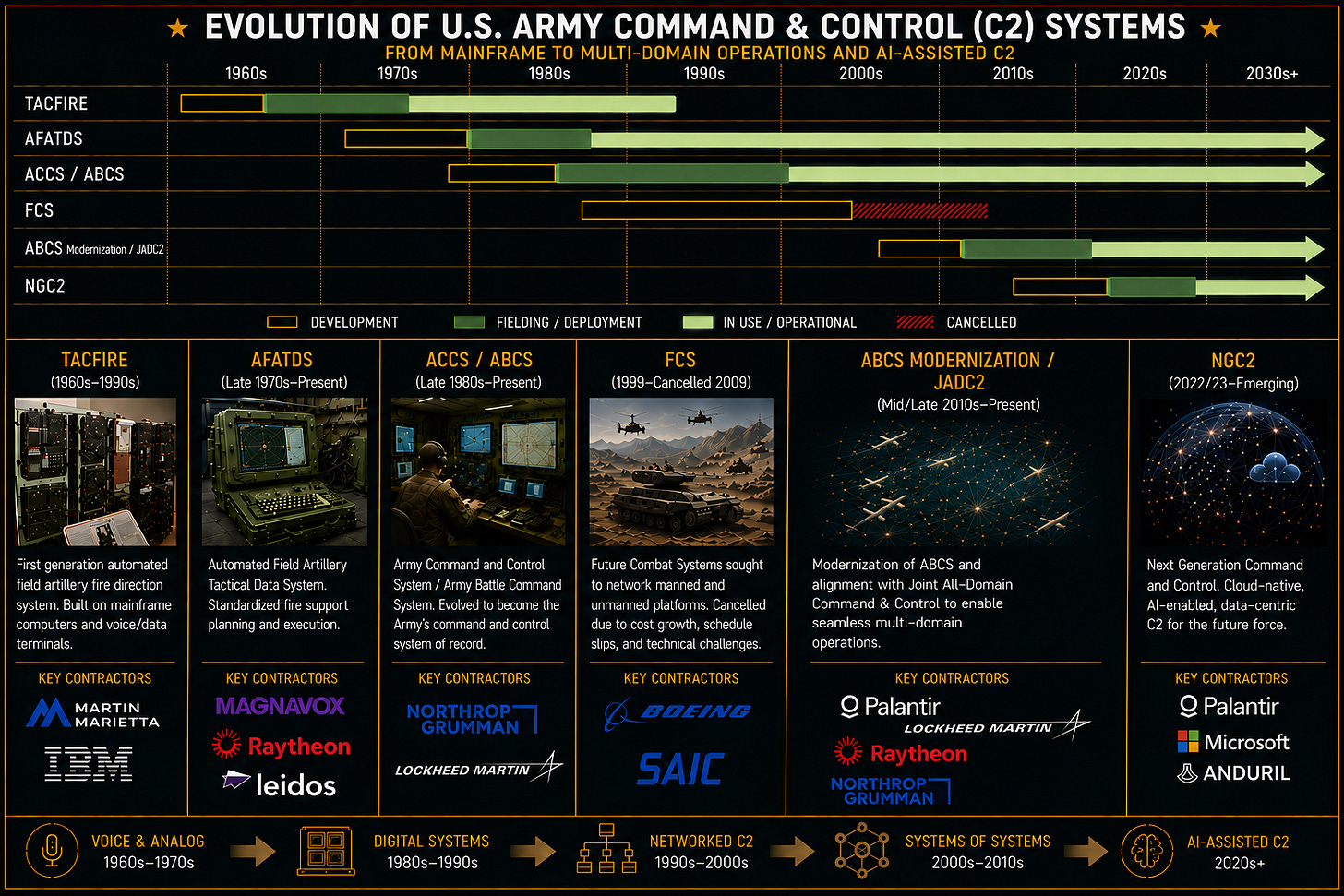

The first field exercises just kicked off for the $4 billion Next Generation Command & Control (NGC2) program. Known as Ivy Sting and Ivy Mass, and culminating in Project Convergence later this year, this effort seeks to deliver what has been the dream of Army planners for over 60 years: a unified command and control system that’s able to seamlessly connect commanders, sensors and shooters in a robust kill web that provides unparalleled situational awareness and a dramatically compressed kill chain. CX2 is presently participating in Ivy Mass and has a front-row seat to how this system is taking shape.

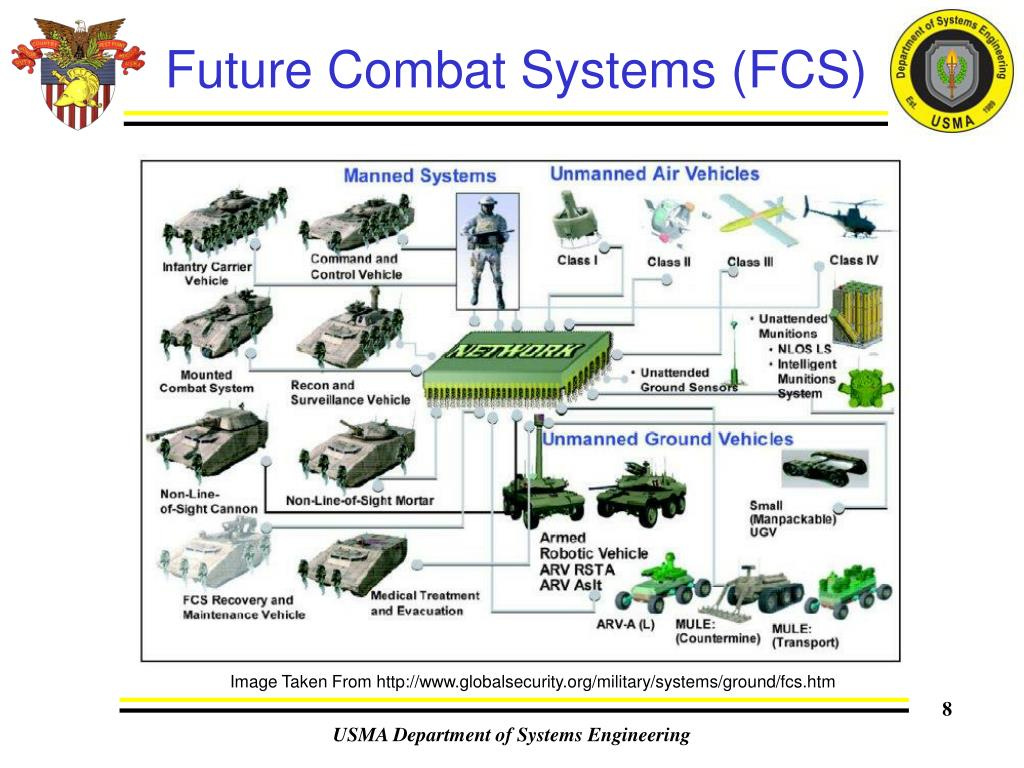

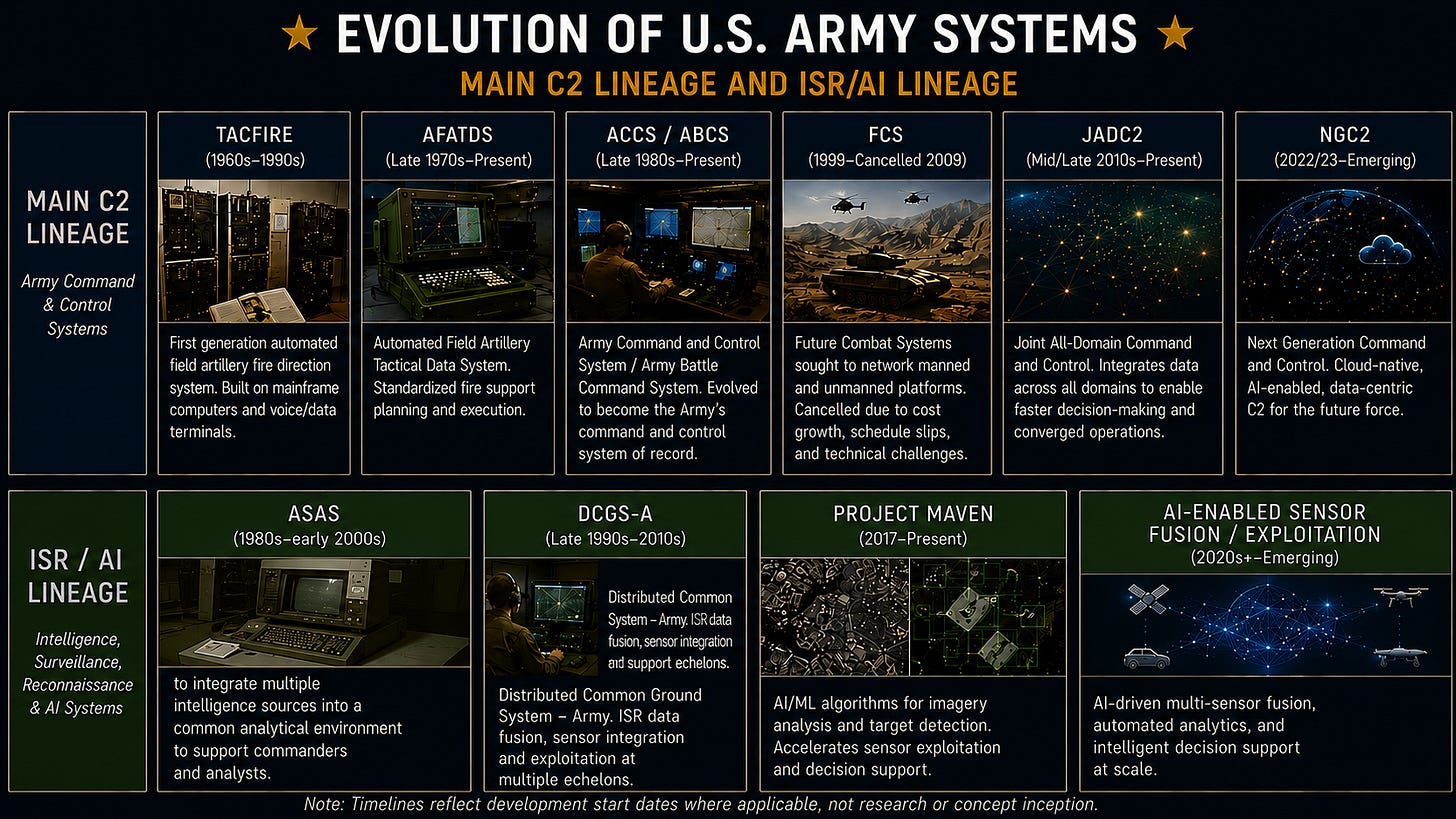

This vision of unified command & control is nothing new for the Army: it’s been tried before again and again and again — with mixed results. The last attempt, Future Combat Systems in the early 2000s, lit $18 billion on fire (and even more if we include the cancelled TSAT program that was to be the space comm layer) with little to show for it other than a boost to Boeing and SAIC’s share price.

In this piece, I’ll go over the history of the Army’s C2 efforts along with how it grappled with the introduction of new tech like GPS. I’ll focus primarily on the ground-centric systems here, since air defense systems like FAAD, C-RAM C2 and IFPC are an entirely different solution set. Finally, I’ll talk about what’s different and what we might learn from it.

What’s different this time?

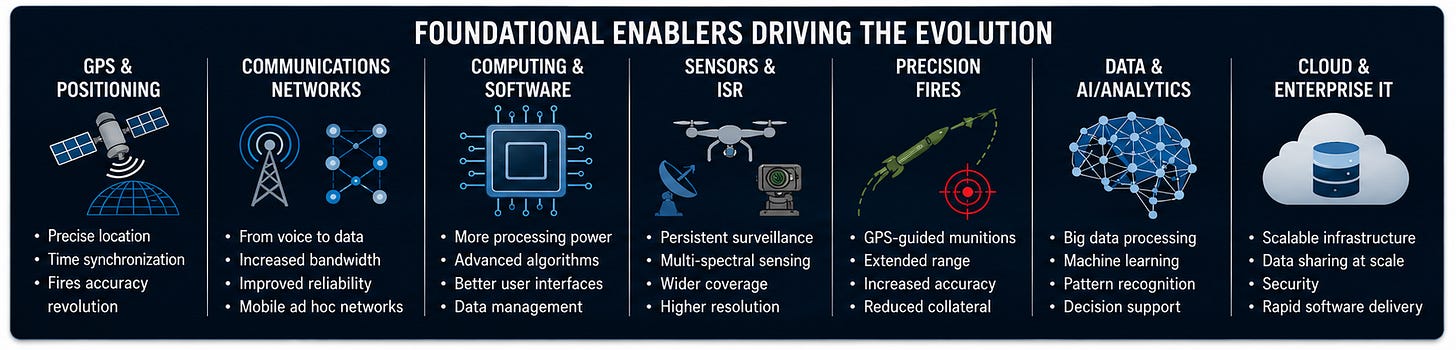

Since FCS was cancelled, commercial technology has evolved quite a bit. So has the acquisition model, with new players like Palantir and Anduril entering the field that are not dependent on cost-plus business models. Networking and wireless data management technology and software that were exquisite 20 years ago have become commercialized and ubiquitous. Computing power is 1000x cheaper today than it was then.

The proliferation of autonomous systems on the battlefield is becoming a forcing function for the Army to finally resolve a problem it has been struggling with since the TACFIRE era of the 1970s: fragmented command-and-control architectures that cannot coherently integrate sensors, shooters, autonomy, and decision-making at scale.

Earlier generations of battle command systems assumed relatively finite inputs: human observers, discrete ISR feeds, bounded networks. Autonomous systems fundamentally alter that equation because they are not merely platforms; they are persistent producers and consumers of data. A force operating hundreds or thousands of unmanned aerial systems, loitering munitions, autonomous ground vehicles, and AI-enabled sensors cannot function through stove-piped architectures and manually synchronized workflows. The scale alone breaks the model. Pentagon planners increasingly discuss autonomous systems not in the hundreds, but in the tens or even hundreds of thousands, a scale shift reflected in the Replicator initiative’s push to field large numbers of low-cost autonomous platforms within an 18-to-24-month window. What emerges is a totally different environment, with AI-enabled machines continuously generating targets, telemetry, classifications, positional updates, electronic signatures, and probabilistic assessments faster than humans can manually reconcile them.

In that environment, integrated C2 stops being an aspirational modernization goal and becomes a prerequisite for operational survival. Systems like ATAK, Anduril’s Lattice, Maven, and emerging NGC2 architectures are all attempts to address this reality by transforming command-and-control from a collection of discrete systems into a continuously adaptive data environment. The battlefield itself begins to resemble a distributed software ecosystem in which autonomous systems are both participants in the network and drivers of its evolution. In a sense, autonomy is forcing the Army to confront something it has deferred for decades: command-and-control can no longer be organized around platforms and programs. It must be organized around data, orchestration, and machine-speed coordination in environments where disruption, degradation, and information overload are assumed from the outset.

Integrated Army C2 has always been just out of reach

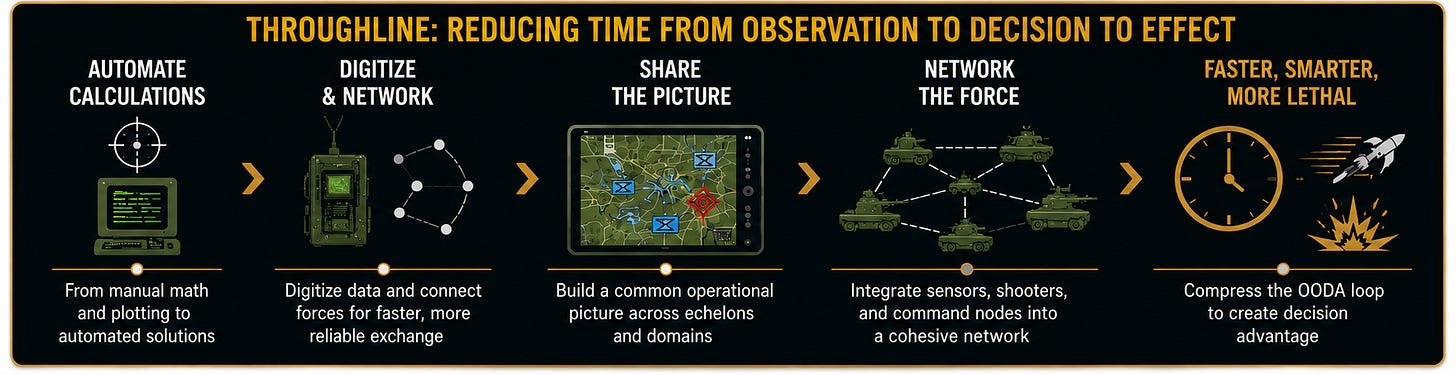

What’s striking, looking back across sixty years of Army command-and-control systems, is not how much has changed—but how persistent the core ambition has been. From TACFIRE to NGC2, the throughline is almost embarrassingly simple: compress time, reduce uncertainty, and move information faster than the enemy can react. Everything else—hardware, doctrine, buzzwords—is scaffolding around that singular obsession.

Origins

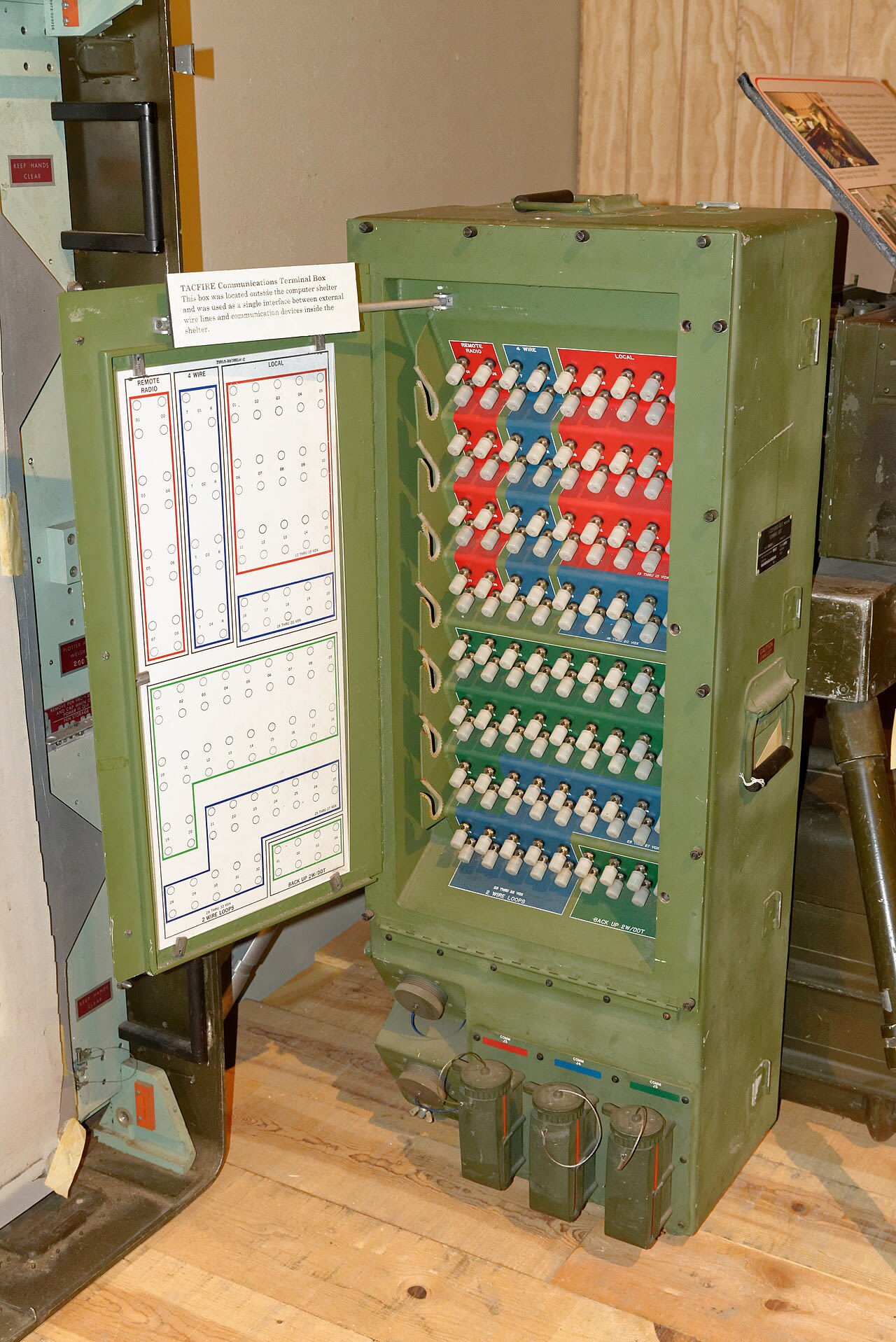

TACFIRE, born in the 1960s, feels primitive now, but it represented a quiet revolution. Before it, artillery was an exercise in disciplined friction: forward observers calling in targets, fire direction centers calculating trajectories by hand, guns firing on delayed instructions. TACFIRE didn’t eliminate the humans; it reorganized them around a machine that could think faster. It was less about automation than about tempo. If you could shave minutes down to seconds, you could reshape the battlefield. That was the wager.

But TACFIRE’s scope was narrow. It solved the artillery fire control problem (partially) without pretending to solve the war. And in that constraint, there was a kind of clarity. The system knew what it was for.

The decades that followed are, in some sense, a story of losing that clarity.

Introduction of fully digital tools, and stovepipes

By the 1980s and into the Gulf War era, the Army began assembling a constellation of systems: AFATDS (Advanced Field Artillery Tactical Data System) for fires, MCS (Maneuver Control System) for maneuver, ASAS (All Source Analysis System) for intelligence, and Blue Force Tracker (BFT) for tracking friendly forces. Each system did something TACFIRE-like within its own domain, but the ambition had expanded. No longer was the goal simply to accelerate a function; it was to integrate functions. Commanders didn’t just want faster artillery. They wanted a coherent picture of the battlefield.

GPS and the PNT revolution

Enter GPS, stage left. Starting in the late 1970s, early GPS, still imperfect and still intermittently available, started to replace the messy, human process of map reading, terrain association, and grid estimation. It didn’t fully solve the problem, but it changed its character. Uncertainty was no longer something you managed; it was something you expected the system to eliminate.

The First Gulf War exposed both the potential and the limits. GPS came into its own here—not yet ubiquitous, but decisive enough to shift how forces moved and fired. Units navigated with a confidence that would have been unthinkable a decade prior. Fires became more precise not just because the math improved, but because the inputs did. The battlefield began to flatten into coordinates.

But even then, there were hints of fragility. GPS worked because it was uncontested. It was a gift of the environment, not a guarantee of it.

The Army Battle Command System (ABCS) of the 1990s and 2000s represented a more explicit attempt to build a “system of systems.” Blue Force Tracker, CPOF, DCGS-A: each was another brick defining the battlefield. For the first time, commanders could see friendly forces in near real time, collaborate across distances, and plan with digital tools instead of acetate overlays.

If TACFIRE was about speed, ABCS was about visibility, and visibility was increasingly anchored in GPS. Blue Force Tracker did not just display units—it located them with a precision that made the map itself feel alive. AFATDS, now matured from its TACFIRE lineage, could assume that firing units, targets, and observers were all operating on a shared spatial truth. The battlefield wasn’t just visible; it was synchronized.

This was the quiet revolution of PNT: not just knowing where you are, but ensuring that everyone else knows it too, in the same frame of reference, at the same moment in time. But this synchronization came with a hidden cost. Systems began to assume GPS as a constant—like gravity. Workflows were built around it. Decision-making accelerated because position was treated as solved.

By the early 2000s, the Army had, in effect, built a command-and-control architecture that depended on GPS without fully acknowledging the dependency. SAASM (Selective Availability Anti-Spoofing Module), the security architecture that lets authorized receivers use the encrypted military GPS signal, improving resistance to spoofing while denying that signal to the enemy, had been allowed to grow stale. Meanwhile, the much more robust M-code signal, enabled by programs like OCX (cancelled this year), GPS Block III and MGUE, suffered over a decade of deployment delays due to procurement malpractice. The result was a gap of roughly 6 to 10 years between when customers were directed to transition away from SAASM and when M-code receivers became fully functional. Compounding the problem, our allies became increasingly dependent on GPS but were at the back of the line to receive the new receivers and the crypto keys required for them to function.

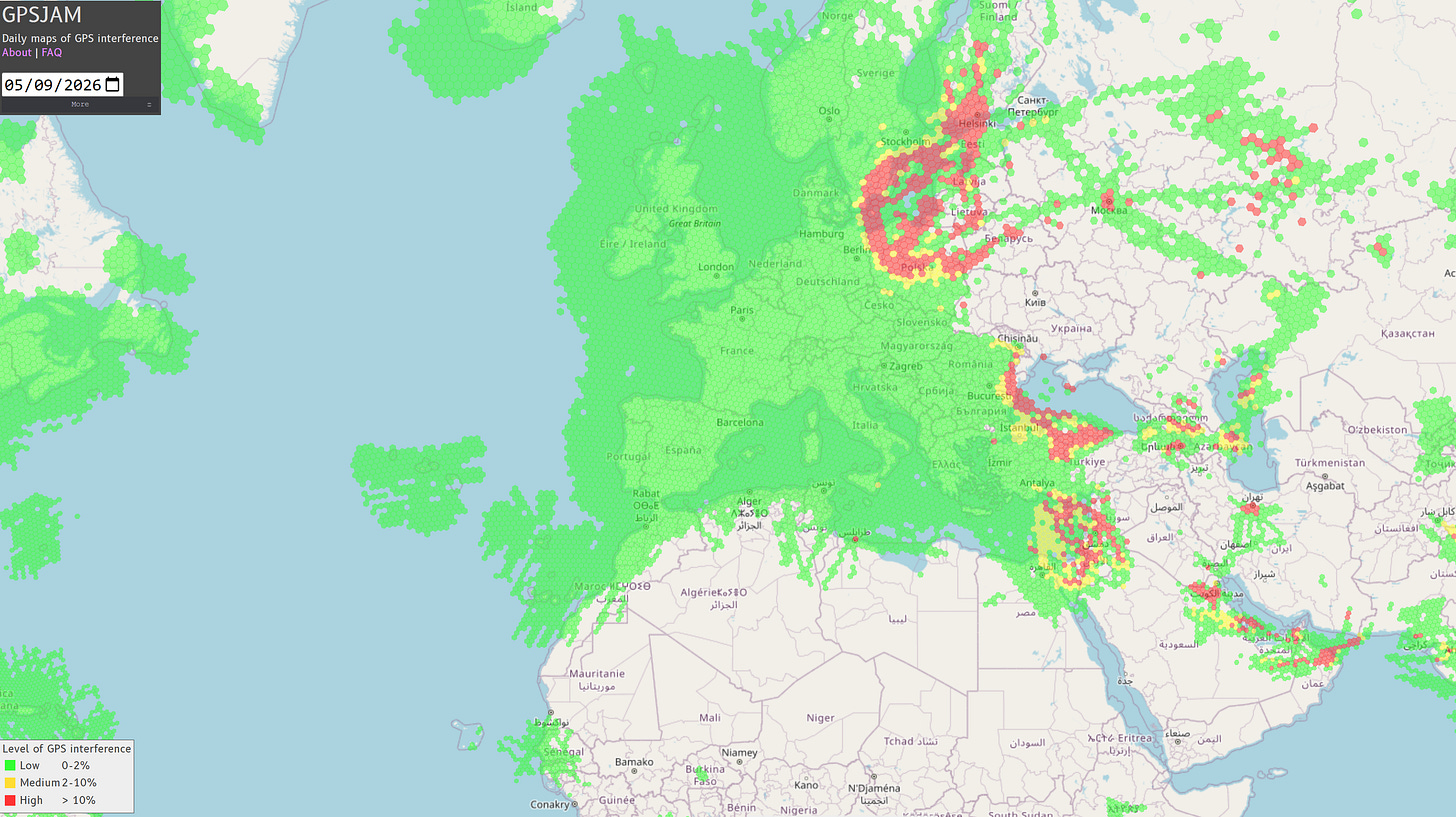

GPS-Denied

Russia’s use of GPS jamming and spoofing, first in localized experiments, then more visibly in Syria, and ultimately at scale in Ukraine, forced a confrontation with something the architecture had quietly avoided: what happens when position is no longer trustworthy? Almost overnight, the accuracy rate for GPS-guided artillery shells and HIMARS rockets in Ukraine went from better than 50% to less than 10%. The Ukrainians stopped using the Excalibur shells entirely, something that could easily have been avoided if M-code had been available and lived up to expectations.

Today GPS jamming is so ubiquitous that there is a website that tracks it worldwide and is updated daily. In GPS-denied or degraded environments, the entire edifice of digital command and control begins to wobble. The battlefield, once neatly mapped, reverts to ambiguity.

A generation of systems had been built on the premise of persistent, accurate PNT. When that premise failed, there was no easy fallback. The response has been both evolutionary and reactive.

Efforts like M-code represent an attempt to harden GPS itself. Other efforts, like CRPA antennas, aim to make GPS receivers more robust. But hardening GPS is only part of the solution. Contrast that with contemporary approaches that attempt to decouple from GPS altogether. Systems like DAPS attempt to provide alternative sources of positioning, integrating inertial navigation, signals of opportunity, and other techniques to maintain awareness even when GPS is degraded or denied. UAS are increasingly reverting to older techniques such as terrain-referenced navigation, and some companies like Q-CTRL are even looking at gravity-based navigation to unshackle us from GPS. The goal is not perfect precision, but robustness and survivability.

In other words, the system is learning that uncertainty cannot be eliminated, only managed.

An exercise in procurement malpractice

Future Combat Systems (FCS), launched in the early 2000s, was the Army’s most ambitious attempt to reimagine command and control as a fully networked system-of-systems. Sensors, shooters, vehicles, radios, and unmanned platforms would all exist within a unified architecture, sharing data continuously across the force. Information superiority would become the decisive advantage. In retrospect, FCS reads less like a failed program than an early preview of ideas that would later reappear under different names: distributed sensors, network-centric warfare, integrated battle command, and persistent connectivity. But FCS attempted to realize all of these concepts simultaneously, binding software, hardware, and doctrine into a single tightly coupled system whose complexity ultimately exceeded the Army’s ability to field it. What it underestimated was not technology, but integration itself. The network that was supposed to simplify warfare became too complex to stabilize long enough to survive.

By the time the program was canceled in 2009 (fun fact: the program manager for FCS was none other than former Boeing CEO Dennis Muilenburg), it had become clear that the problem was not simply execution. It was architectural. FCS treated command and control as something that could be designed in advance, specified in detail, and delivered as a finished product. But the battlefield, and the technologies shaping it, were evolving too quickly for that model to hold.

In that sense, FCS failed in a way that was ultimately instructive. It revealed the limits of monolithic design, and the risks of trying to impose coherence through centralization. The lessons would linger, even as the program itself disappeared.

And they would resurface, years later, in a very different approach.

ISR management: a parallel line of effort that folds in

ASAS emerged during the final years of the Cold War as the Army confronted a new kind of problem: not a shortage of information, but an excess of it. Signals intelligence, imagery, battlefield reporting, and human sources all arrived through separate systems and were interpreted locally, making coherence difficult even when the data existed. ASAS attempted to reduce that friction by pulling disparate streams into a common analytical environment, though the architecture remained workstation-centric, analyst-driven, and fundamentally linear. Intelligence still moved in stages—collection, analysis, dissemination—but ASAS marked the beginning of the Army treating intelligence less as a series of reports and more as a continuously accumulating data environment.

After 9/11, DCGS-A inherited this architecture but attempted to scale it into permanence. The wars in Iraq and Afghanistan transformed ISR from episodic collection into continuous observation: full-motion video, drone surveillance, biometrics, geospatial data, signals intelligence. DCGS-A sought to unify this expanding sensor ecosystem into a persistent operational framework, but in doing so became emblematic of a deeper tension between traditional defense integration models and the emerging software-centric conception of battlefield intelligence. The question was no longer whether data could be collected. It was whether humans could remain cognitively synchronized with the scale of its accumulation. Around this same time, Palantir emerged as a popular choice for analysts on the ground in theater, but not for the procurement folks responsible for buying it. This famously led to Palantir suing the government in 2016 and, for a change, winning, which opened up DCGS to commercial solutions rather than just awarding it to Raytheon. Palantir later went on to win a major part of this contract.

Maven represented a different answer to the same problem. Where ASAS organized intelligence and DCGS federated it, Maven sought to compress perception itself. Machine learning systems were trained not merely to store ISR, but to identify patterns within it and so reduce the burden of attention. The center of gravity shifted from databases to inference. Yet Maven’s early trajectory revealed how unstable this transition would be. Google’s withdrawal from the program in 2018 briefly interrupted what many inside the Pentagon had assumed was an inevitable convergence between Silicon Valley and defense (interestingly enough this same event was a key catalyst for the creation of DIU and the founding of Anduril). In the aftermath, the Joint AI Center (JAIC) emerged to institutionalize military AI development across the department, later evolving into the CDAO as AI became less a niche analytical capability than an organizing layer for modern command-and-control itself. Systems like Anduril’s Lattice, ATAK, and emerging NGC2 architectures extend this logic further still: sensors, autonomous systems, operators, and algorithms increasingly exist within the same operational loop. The battlefield no longer merely collects information. It persistently observes, prioritizes, and recommends, continuously shaping human attention in real time.

Bottom-up C2

Between the sprawling institutional architectures of DCGS-A and the emerging AI-enabled ecosystems of NGC2, systems like ATAK introduced a different model entirely. The Android Tactical Assault Kit emerged not from the logic of centralized enterprise integration, but from operational immediacy. Lightweight, mobile, and rapidly extensible, ATAK pushed situational awareness and sensor integration downward—from command posts to individual operators and small units. What made ATAK significant was not simply its interface, but its architecture. Unlike earlier battle command systems built around fixed workflows and tightly controlled integration pipelines, ATAK functioned more like a tactical software platform: modular, API-driven, and adaptable to rapidly changing operational needs. Over time it became a convergence layer for mapping, ISR feeds, targeting data, Blue Force Tracking, unmanned systems, and edge-device communications across both conventional and special operations forces. In many ways, ATAK represented the military’s first widely successful realization that battlefield software could evolve more like a commercial ecosystem than a traditional defense program. The system foreshadowed much of what would later define the NGC2 era: distributed interfaces, edge computing, real-time collaboration, and a battlefield architecture in which the user is no longer merely consuming information, but continuously participating in the network itself.

Systems like Anduril’s Lattice extend the logic of ATAK further still. Lattice is not simply an ISR display layer; it is an orchestration environment in which sensors, autonomous systems, operators, and AI models exist within the same operational loop. The boundary between collection, analysis, and action becomes increasingly porous.

Emerging NGC2 architectures reflect this same direction of travel. In addition to Anduril’s Lattice, Lockheed Martin’s work within the Army’s NGC2 prototyping efforts points toward a future in which command-and-control itself becomes modular, cloud-native, and continuously adaptive across contested environments. At the same time, newer entrants such as Picogrid working in the same ecosystem suggest a parallel shift toward lightweight, software-defined integration layers capable of rapidly connecting heterogeneous sensors, autonomous systems, and edge devices without the burden of traditional defense architectures. We are seeing the emergence of an ecosystem that can try several architecturally different approaches to see what works best. Like ATAK, this is bottom-up rather than top-down: a welcome change from the era of top-down, requirements-heavy C2 systems like FCS.

The trajectory from ASAS to NGC2 is therefore not merely a story about better software or larger datasets. It is the gradual construction of a battlefield architecture in which cognition itself becomes distributed across networks, algorithms, and machines and which is much more tolerant of federated and ad hoc systems.

Melt and repour

NGC2—Next-Generation Command and Control—is less a system than a repudiation of the old approach. It begins with a different premise: that command and control is not about platforms, but about data. Data should not live inside systems; systems should exist to manipulate shared data. It’s a subtle shift, but a profound one. Embedded within that shift is another realization: the bottleneck is no longer the movement of information, but the interpretation of it.

As GPS degrades and positional certainty erodes, knowing where something is becomes less reliable. In that ambiguity, knowing what something is—through pattern recognition, behavior, and probabilistic classification—gains importance. Maven-like systems begin to compensate for uncertainty in position with confidence in identification.

But this introduces a new tension. Earlier systems were trusted because they were legible. AI-based intelligence systems like Maven operate probabilistically, often opaquely. They don’t just accelerate decisions; they shape them, by determining what enters the decision space at all.

In that sense, Maven is less a component of NGC2 than a precursor to it. NGC2 assumes a world of overwhelming data and contested networks. Maven addresses the human constraint within that world: attention. It represents a shift from systems that move information to systems that shape perception. That may prove to be the more consequential transformation.

In the TACFIRE era, software was inseparable from hardware. In the ABCS era, software was distributed across multiple systems. In the NGC2 vision, software becomes something closer to an ecosystem—modular, continuously updated, loosely coupled. The analogy is not military at all; it is commercial. Think less “weapons system” and more “operating system.”

This is where the language starts to change. Cloud. Edge computing. AI-enabled decision support. These are not just technological upgrades; they signal a different way of thinking about command and control. The battlefield becomes a network, and the network becomes the primary terrain.

But the ambition is familiar. Faster decisions. Better synchronization. Shorter kill chains. The difference is that the Army is now trying to achieve these goals not through centralized systems, but through distributed architectures that assume disruption as a baseline condition.

Project Convergence, CJADC2, TITAN—these initiatives are all attempts to operationalize that shift. Sensors feed data into networks, algorithms help prioritize targets, shooters receive instructions with minimal delay. These systems are increasingly designed to function even when parts of the network are degraded or absent.

Yet beneath the technical evolution, the same constraints persist. Bandwidth is limited. Networks are vulnerable. Adversaries adapt. And humans—still the ultimate decision-makers—must interpret and trust the outputs of increasingly complex systems.

Trust, in particular, is an underappreciated variable. What happens when decision support depends on fused data from degraded GPS, inertial systems drifting over time, and algorithms reconciling conflicting inputs? The system may be more resilient, but is it more legible? There is a risk that in solving the problem of vulnerability, we reintroduce the problem of comprehension.

The logic of modern warfare—multi-domain operations, contested environments, rapid decision cycles—demands something like NGC2. The question is not whether the Army will move in this direction, but how well it will manage the transition.

Boiling it all up

Looking back, the history of command and control is less a straight line than a series of oscillations. Between certainty and uncertainty. Between dependence and resilience. Between systems that assume the world is stable, and systems that are built for when it is not.

TACFIRE sat closer to one end of that spectrum: focused, constrained, comprehensible. NGC2 sits at the other: expansive, adaptive, and explicitly designed for a world where even something as fundamental as position cannot be taken for granted.

What remains constant is the underlying ambition—to see the battlefield more clearly, to act more quickly, to impose order on chaos. It is a deeply human ambition, even as it becomes increasingly mediated by machines.

Perhaps that is the real throughline. Not the systems themselves, but the belief that with the right tools, uncertainty can be tamed. That war can be made legible, if only we can process enough information, fast enough. That is the belief that has driven six decades of innovation, and the goal it points to, despite everything, remains just out of reach.

The massive proliferation of autonomous systems on the battlefield has made enhanced C2 not just a nice-to-have but an absolute imperative. Robots are kind of dumb and, unlike humans, lack initiative. Even if we could give them initiative, we may not trust them to use it, because culturally we fear a human not being the one to push the final button. As we evolve from one operator per system, as we see on the battlefield in Ukraine today, to one operator managing many, the necessity of robust C2 to manage our emerging army of autonomous systems may be what finally forces us to get our act together on unified C2. This, more than anything, is what I think will make NGC2 succeed: the fact that it cannot fail.